I have heard something about an unordered_map having a worst case complexity of N or N2, but as far as I am aware, it usually performs all operations in O(1).

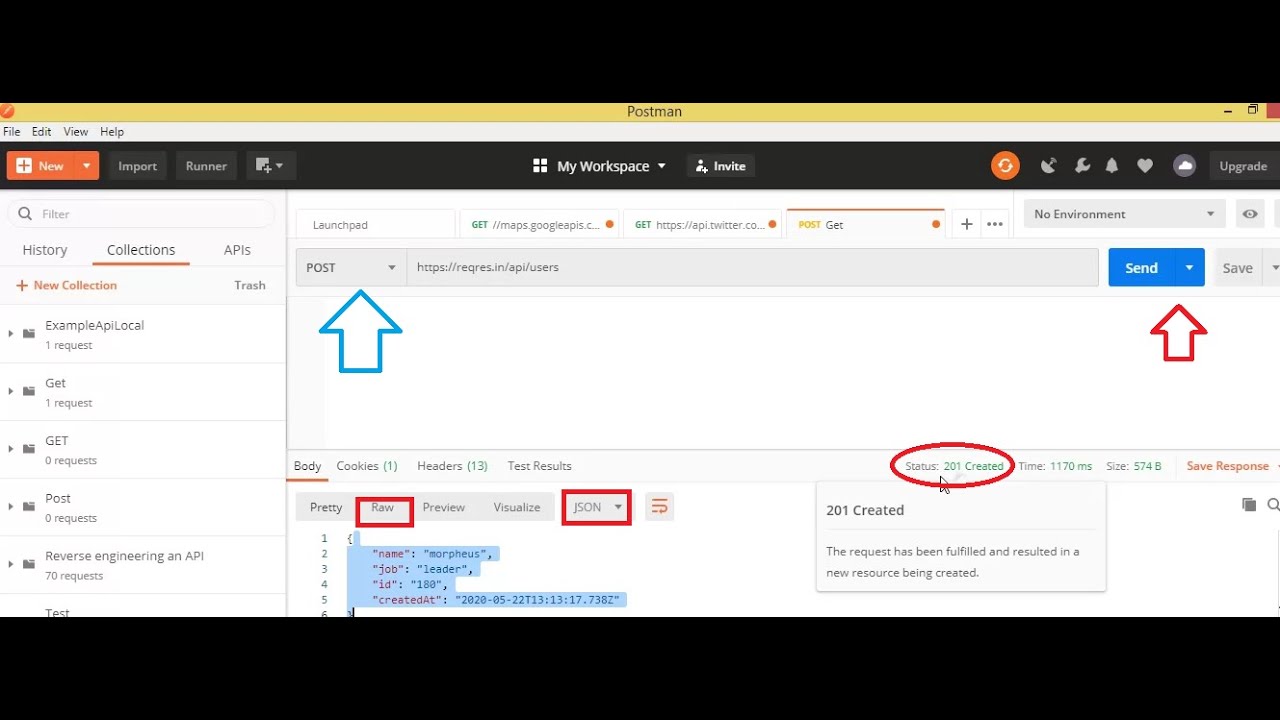

The hashmap in question is an unordered_map with a string as the key and an integer as the value. Hashmaps have a O(1) time complexity for most operations and an LRU cache requires both a hashmap and a doubly linked list. Now this was very surprising, and I had no idea why this was the case. cd Downloads/ tar -xzf Postman-linux-圆4-7.32.0.tar.gz sudo mkdir -p /opt/apps/ sudo mv Postman /opt/apps/ sudo ln -s /opt/apps/Postman/Postman. The performance dropped from 1 second to 0.006 seconds! I did a test, a recursive combinatorics problem, using a regular hashmap to save the results of outcomes during recursion (dynamic programming), and did the same with the only difference being that an LRU cache implementation (size 1024) was used instead.

I've not read up much about LRU caching beyond the structures it's made of, but I am still quite surprised at how much faster it is than a regular hashmap.

Ron0studios Asks: How is LRU Caching faster than a hashmap?
